This text is a reprint of Linked Open Data: The Essentials - A Quick Start Guide for Decision Makers by Florian Bauer (REEEP) and Martin Kaltenböck (Semantic Web Company). The full book is available as a PDF file at: http://www.semantic-web.at/LOD-TheEssentials.pdf

Linked Open Data – The Essentials

By Florian Bauer (REEEP) and Martin Kaltenböck (Semantic Web Company)

From Open Data to Linked Open Data

A brief history and factbook of Open Government, Open (Government) Data & Linked Open Data

This introductory chapter will describe the principles of linking data; define important terms such as Open Government, Open (Government) Data and Linked Open (Government) Data; and explain relevant mechanisms to ensure a solid foundation before going more in-depth. Each subsequent chapter explains a specific topic and suggests additional resources, such as books and websites, for gaining more detailed insights on a particular theme. We hope that by introducing you to the possibilities of Linked Open Data (LOD), you will be able to share our vision of the future semantic web.

Open Government & Open (Government) Data

When we talk about Open Government today, we refer to a movement that was initiated by “The Memorandum on Transparency and Open Government” [1], a memorandum signed by US President Barack Obama shortly after his inauguration in January 2009. The basic idea of Open Government is to establish modern cooperation among politicians, public administration, industry and private citizens by enabling more transparency, democracy, participation and collaboration. In European countries, Open Government is often viewed as a natural companion to e-government [2].

An important excerpt of the memorandum reads: “My Administration is committed to creating an unprecedented level of openness in Government. We will work together to ensure the public trust and establish a system of transparency, public participation, and collaboration. Openness will strengthen our democracy and promote efficiency and effectiveness in Government.”

The Open Government Partnership [3] was launched on September 20, 2011, when the eight founding governments (Brazil, Indonesia, Mexico, Norway, the Philippines, South Africa, the United Kingdom and the United States) endorsed an Open Government declaration, announced their countries’ action plans, and welcomed the commitment of 38 governments to join the partnership. In September 2011, there were thus 46 national government commitments to Open Government worldwide.

Some of the most important enablers of Open Government are free access to information and the freedom to use and re-use this information (e.g. data and content). After all, without information it is not possible to establish a culture of collaboration and participation among the relevant stakeholders. Therefore, Open Government Data (OGD) is often seen as a crucial aspect of Open Government.

OGD is a worldwide movement to open up government and public administration data, information and content in both human- and machine-readable, non-proprietary formats for re-use by civil society, the economy, media and academia, as well as by politicians and public administrators. It applies only to data and information produced or commissioned by government or government-controlled entities and does not cover data about individuals.

Being open means lowering barriers to ensure the widest possible re-use by anyone. With OGD, a new paradigm came into being for publishing government data that invites everyone to look, take and play!

The often-used term “Open Data” extends beyond governmental institutions to data and information from other relevant stakeholder groups such as business/industry, citizens, NPOs and NGOs, science or education.

Some of the best-known institutions currently undertaking Open Data activities include the World Bank [4], the United Nations [5], REEEP [6], the New York Times [7], The Guardian [8] and the Open Knowledge Foundation (OKFN) [9].

In 2007, 30 Open Government advocates came together in Sebastopol, California, USA to develop a set of OGD principles [10] that underscored why OGD is essential for democracy. In 2010, the Sunlight Foundation [11] expanded these to 10 principles. Even though these principles are neither set in stone nor legally binding, they are widely considered by the global open (government) data community as general guidelines on the topic.

Government Data shall be considered “open” if the data is made public in a way that complies with the principles below:

- Data must be complete. All public data is made available. The term “data” refers to electronically stored information or recordings, including but not limited to documents, databases, transcripts, and audio/visual recordings. Public data is data that is not subject to valid privacy, security or privilege limitations, as governed by other statutes.

- Data must be primary. Data is published as collected at the source, with the finest possible level of granularity, and not in aggregate or modified forms.

- Data must be timely. Data is made available as quickly as necessary to preserve the value of the data.

- Data must be accessible. Data is available to the widest range of users for the widest range of purposes.

- Data must be machine-processable. Data is structured so that it can be processed in an automated way.

- Access must be non-discriminatory. Data is available to anyone, with no registration requirement.

- Data formats must be non-proprietary. Data is available in a format over which no entity has exclusive control.

- Data must be license-free. Data is not subject to any copyright, patent, trademark or trade secret regulation. Reasonable privacy, security and privilege restrictions may be allowed as governed by other statutes.

Compliance with these principles must be reviewable through the following means:

- A contact person must be designated to respond to people trying to use the data; or

- A contact person must be designated to respond to complaints about violations of the principles; or

- An administrative or judicial court must have the jurisdiction to review whether the agency has applied these principles appropriately.

The two principles added by the Sunlight Foundation are as follows:

- Permanence. This refers to the capability of finding information over time.

- Usage costs. One of the greatest barriers to accessing ostensibly publicly available information is the cost imposed on the public for access, even when the cost is de minimis.

The worldwide OGD movement originated in Australia, New Zealand, Europe and North America, but today there is also strong OGD engagement and activity in Asia, South America and Africa. For example, Kenya launched Africa’s first data portal [12] in July 2011.

The European Commission (EC) has also put the issue high on its agenda and is actively pushing OGD forward in Europe. Neelie Kroes, Vice-President of the European Commission responsible for the Digital Agenda, has expressed strong commitment to OGD by announcing an EC data portal by early 2012 and a pan-European data portal, acting as a single point of access to all European national data portals, by 2013. Open Data is an important part of both the Digital Agenda for Europe [13] and the European e-government Action Plan 2011–2015 [14]. In December 2011, the EC also announced its Open Data Strategy for Europe: Turning Government Data into Gold [15].

The countries currently leading in national Open Data activities and initiatives are the United States of America [16], Australia [17], the Scandinavian countries and the United Kingdom [18]. All of these countries have a high political commitment to both Open Data and central Open Data portals, and they all have a strong Open Data community. These innovative countries and the people behind them can be considered the pioneers of OGD.

Two very good resources about the worldwide OGD movement are:

- SWC world map of Open Data initiatives, activities and portals: http://bit.ly/open-data-map

- OKFN comprehensive list of data catalogs curated by experts from around the world: http://datacatalogs.org

For a good example of a national OGD process, please refer to the “UK Open Government Data Timeline” by Tim Davies.

Links

- [1] The Memorandum on Transparency and Open Government: http://www.whitehouse.gov/the_press_office/TransparencyandOpenGovernment

- [2] e-government, Wikipedia: http://en.wikipedia.org/wiki/EGovernment

- [3] Open Government Partnership: http://www.opengovpartnership.org

- [4] Open Data World Bank: http://data.worldbank.org

- [5] Open Data United Nations: http://data.un.org

- [6] Open Data REEEP: http://data.reegle.info

- [7] Open Data New York Times: http://data.nytimes.com

- [8] Open Data The Guardian: http://www.guardian.co.uk/world-government-data

- [9] Open Knowledge Foundation: http://okfn.org

- [10] 8 Principles of Open Government Data: http://www.opengovdata.org/home/8principles

- [11] Sunlight Foundation: 10 principles of Open Government Data: http://sunlightfoundation.com/policy/documents/ten-open-data-principles

- [12] Kenya Open Data Portal: http://opendata.go.ke

- [13] Digital Agenda for Europe: http://ec.europa.eu/information_society/digital-agenda

- [14] eGovernment Action Plan Europe 2011–2015: http://ec.europa.eu/information_society/activities/egovernment/action_plan_2011_2015

- [15] Announcement: Open Data Strategy for Europe: http://bit.ly/s5FiQo

- [16] Open Data Catalogue United States of America: http://data.gov

- [17] Open Data Catalogue of Australia: http://data.gov.au

- [18] Open Data Catalogue United Kingdom: http://data.gov.uk

Further Reading

- Open Government, Wikipedia: http://en.wikipedia.org/wiki/Open_government

- Open Knowledge Foundation, OGD website: http://opengovernmentdata.org

- Open Data, Wikipedia: http://en.wikipedia.org/wiki/Open_data

- Open Knowledge Foundation Blog: http://blog.okfn.org

Putting the L in Front: From Open Data to Linked Open Data

As mentioned above, OGD is all about opening up information and data, as well as making it possible to use and re-use them. An OGD requirements analysis conducted in Austria in June 2011 highlighted the following eleven areas to consider when thinking about OGD:

- Need for definitions

- Open Government: transparency, democracy, participation and collaboration

- Legal issues

- Impact on society

- Innovation and knowledge society

- Impact on economy and industry

- Licenses, models for exploitation, terms of use

- Data relevant aspects

- Data governance

- Applications and use cases

- Technological aspects

When considering how to fully benefit from OGD in concrete cases, it is clear that interoperability and standards are key. This is where LOD principles come into play.

To fully benefit from Open Data, it is crucial to put information and data into a context that creates new knowledge and enables powerful services and applications. As LOD facilitates innovation and knowledge creation from interlinked data, it is an important mechanism for information management and integration.

There are two equally important viewpoints to LOD: publishing and consuming. Throughout this guide, we will always address LOD from both the publishing and consumption perspectives.

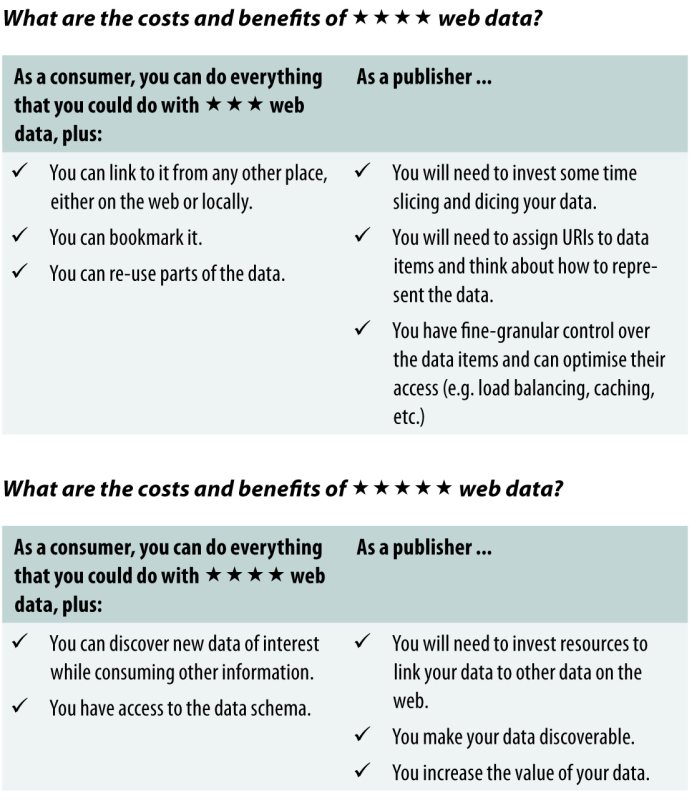

The path from open (government) data to linked open (government) data was best described by Sir Tim Berners-Lee [1] when he first presented his 5 Stars Model at the Gov 2.0 Expo in Washington DC in 2010. Since then, Berners-Lee’s model has been adapted and explained in several ways; the following adaptation of the 5 Stars Model [2] by Michael Hausenblas [3] explains the costs and benefits for both publishers and consumers of LOD.
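The five-star scheme can be read as a cumulative checklist. The Python sketch below encodes the stars as they are commonly summarised (1: on the web under an open license; 2: machine-readable structured data; 3: a non-proprietary format; 4: URIs and RDF to identify and describe things; 5: links to other people's data). The `Dataset` fields are hypothetical descriptors invented for illustration, not part of any standard or tool.

```python
from dataclasses import dataclass


@dataclass
class Dataset:
    # Hypothetical descriptors of a published data set (illustration only).
    on_web_with_open_license: bool
    machine_readable: bool
    non_proprietary_format: bool
    uses_uris_and_rdf: bool
    linked_to_other_data: bool


def star_rating(ds: Dataset) -> int:
    """Return 0-5 stars; each star requires all of the previous ones."""
    criteria = [
        ds.on_web_with_open_license,  # 1 star: available on the web, open license
        ds.machine_readable,          # 2 stars: structured, machine-readable data
        ds.non_proprietary_format,    # 3 stars: e.g. CSV instead of a proprietary spreadsheet
        ds.uses_uris_and_rdf,         # 4 stars: URIs identify things, RDF describes them
        ds.linked_to_other_data,      # 5 stars: links to other people's data
    ]
    stars = 0
    for met in criteria:
        if not met:
            break  # stars are cumulative, so stop at the first unmet level
        stars += 1
    return stars


csv_download = Dataset(True, True, True, False, False)
print(star_rating(csv_download))
```

Stopping at the first unmet criterion reflects the staircase idea: a data set that already uses RDF but is not openly licensed still earns no stars.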

What are the costs and benefits of web data?

LOD is becoming increasingly important in the fields of state-of-the-art information and data management. It is already being used by many well-known organisations, products and services to create portals, platforms, internet-based services and applications.

LOD is domain-independent and is being applied in many different areas and domains, which gives it an advantage over traditional data management. For example, the project LOD2 – Creating Knowledge Out of Interlinked Data [4], funded by the European Commission under the 7th Framework Programme, develops LOD mechanisms and tools based on three real use cases: OGD, linked enterprise data, and LOD for media and publishers. For further reading on linked open (government) data, please refer to the W3C Government Linked Data (GLD) Working Group [5].

The following chapters discuss the benefits of LOD, as well as basic LOD consuming and publishing principles for creating powerful and innovative services for knowledge management, decision making and general data management. The best practice examples reegle.info [6] and OpenEI [7] show how LOD can have a great impact on their respective target groups. Another popular example of applied OGD is legislation.gov.uk.

Links

[1] Sir Tim Berners-Lee (Wikipedia): http://en.wikipedia.org/wiki/Tim_Berners-Lee

[2] 5 Stars Model on Open Government Data by Michael Hausenblas: http://lab.linkeddata.deri.ie/2010/star-scheme-by-example

[3] Michael Hausenblas: http://semanticweb.org/wiki/Michael_Hausenblas

[4] LOD2 – Creating Knowledge Out of Interlinked Data: http://www.lod2.eu

[5] GLD W3C Working Group: http://www.w3.org/2011/gld/charter

[6] Clean energy info portal reegle.info: http://www.reegle.info

[7] Open Energy Info (OpenEI): http://en.openei.org

Further reading

- Linked Data, Wikipedia: http://en.wikipedia.org/wiki/Linked_data

- Linked Data – Connect Distributed Data Across the Web: http://linkeddata.org

- Linked Data: Evolving the Web into a Global Data Space, Heath and Bizer: http://linkeddatabook.com

- Linking Government Data, David Wood (Editor), Springer; 2011 edition (November 12, 2011), ISBN-10: 146141766X, ISBN-13: 978-1461417668

- W3C Linking Open Data Community Project: http://www.w3.org/wiki/SweoIG/TaskForces/CommunityProjects/LinkingOpenData

The Power of Linked Open Data

Understanding the World Wide Web Consortium’s (W3C) [1] vision of a new web of data

Imagine that the web is like a giant global database. You want to build a new application that shows the correlation between economic growth, renewable energy consumption, mortality rates and public spending on education. You also want to improve the user experience with mechanisms like faceted browsing. You can already do all of this today, but you probably won’t: today’s methods for integrating information from different sources, known as mashing up data, are often too time-consuming and too costly.

Two driving factors can cause this unpleasant situation:

First of all, databases are still seen as “silos”, and people often do not want others to touch the database for which they are responsible. This way of thinking is based on some assumptions from the 1970s: that only a handful of experts are able to deal with databases and that only the IT department’s inner circle is able to understand the schema and the meaning of the data. This is obsolete. In today’s internet age, millions of developers are able to build valuable applications whenever they get interesting data.

Secondly, data is still locked up in certain applications. The technical problem with today’s most common information architecture is that metadata and schema information are not separated well from application logic. As a result, data cannot be re-used as easily as it should be. Whoever designs a database often has a specific application in mind that will be built on top of it. If we stop emphasising which applications will use our data and focus instead on a meaningful description of the data itself, we will gain more momentum in the long run. At its core, Open Data means that the data is open to any kind of application, and this can be achieved if we use open standards like RDF [2] to describe metadata.
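To show what describing data with RDF means in practice, the sketch below writes two statements about a data set as subject-predicate-object triples and serialises them in N-Triples, a simple line-based RDF syntax standardised by W3C. The `example.org` URIs are hypothetical placeholders; the Dublin Core `title` and `publisher` properties are real vocabulary terms. The hand-written serialiser is for illustration only; in practice an RDF library would normally be used.

```python
from typing import NamedTuple, Union


class IRI(NamedTuple):
    value: str


class Literal(NamedTuple):
    value: str


Term = Union[IRI, Literal]


def term_to_nt(term: Term) -> str:
    """Render a term in N-Triples syntax: IRIs in angle brackets, literals quoted."""
    if isinstance(term, IRI):
        return f"<{term.value}>"
    escaped = (
        term.value.replace("\\", "\\\\").replace('"', '\\"')
        .replace("\n", "\\n").replace("\r", "\\r")
    )
    return f'"{escaped}"'


def to_ntriples(triples: list[tuple[IRI, IRI, Term]]) -> str:
    """One triple per line, each terminated by ' .'."""
    return "\n".join(
        f"{term_to_nt(s)} {term_to_nt(p)} {term_to_nt(o)} ." for s, p, o in triples
    )


DCT = "http://purl.org/dc/terms/"
dataset = IRI("http://example.org/dataset/energy-statistics")  # hypothetical URI
triples = [
    (dataset, IRI(DCT + "title"), Literal("Energy statistics")),
    (dataset, IRI(DCT + "publisher"), IRI("http://example.org/org/ministry")),
]
print(to_ntriples(triples))
```

Because the description lives in the data itself rather than in one application's code, any RDF-aware tool can read these statements.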

Linked Data?

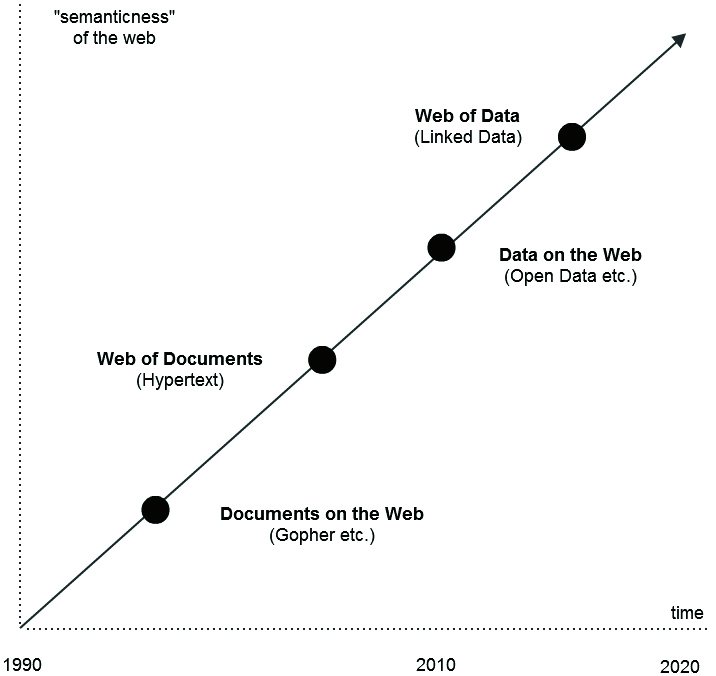

Nowadays, the idea of linking web pages by using hyperlinks is obvious, but this was a groundbreaking concept 20 years ago. We are in a similar situation today since many organizations do not understand the idea of publishing data on the web, let alone why data on the web should be linked. The evolution of the web can be seen as follows:

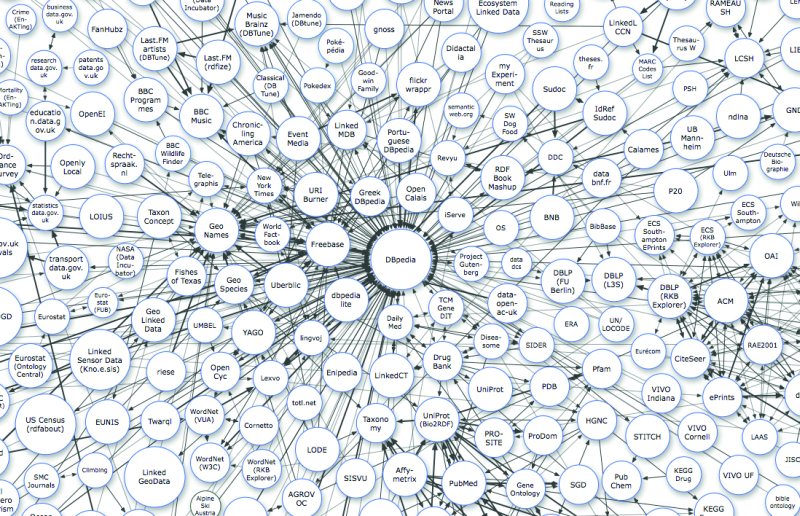

Although the idea of Linked Open Data (LOD) has yet to be recognised as mainstream (like the web we all know today), a lot of LOD is already available. The so-called LOD cloud [3] is estimated to cover more than 50 billion facts from many different domains like geography, media, biology, chemistry, economy, energy, etc. The data is of varying quality, and most of it can also be re-used for commercial purposes.

Please see the 2011 version of the LOD cloud diagram below:

Why should we link data on the web and how do we do it?

All of the different ways to publish information on the web are based on the idea that there is an audience out there that will make use of the published information, even if we are not sure who exactly it is and how they will use it. Here are some examples:

- Think of a Twitter message: not only do you not know all of your followers, but you often don’t even know why they follow you and what they will do with your tweets.

- Think of your blog: it’s like an email to someone you don’t know yet.

- Think of your website: new people can contact you and offer new, surprising kinds of information.

- Think of your email address: you have shared it on the web and have been receiving lots of spam ever since.

In some ways, we are all open to the web, but not all of us know how to deal with this rather new way of thinking. Most often the “digital natives” and “digital immigrants” who have learned to work and live with the social web have developed the best strategies to make use of this kind of “openness.” Whereas the idea of Open Data is built on the concept of a social web, the idea of Linked Data is a descendant of the semantic web.

The basic idea of a semantic web is to provide cost-efficient ways to publish information in distributed environments. To reduce the cost of transferring information among systems, standards play the most crucial role. Either the transmitter or the receiver has to convert or map its data into a structure that can be “understood” by the receiver. This conversion or mapping must be done on at least three different levels: the syntax, the schemas, and the vocabularies used to deliver meaningful information. It becomes even more time-consuming when information is provided by multiple systems. An ideal scenario would be a fully harmonised internet where all of those layers are based on exactly one single standard, but in fact we face too many standards or “de facto standards” today.

How can we overcome this chicken-and-egg problem? There are at least three possible answers:

- Provide valuable, agreed-upon information in a standard, open format.

- Provide mechanisms to link individual schemas and vocabularies so that people can indicate whether their ideas are “similar” and related, even if they are not exactly the same.

- Bring all this information to an environment which can be used by most, if not all of us. For example: don’t let users install proprietary software or lock them in one single social network or web application!

A brief history of LOD

Corresponding to the three points above, here are the steps the LOD community has already taken:

- W3C has published a stack of open standards for the semantic web built on top of the so-called “Resource Description Framework” (RDF). This widely adopted standard for describing metadata was also used to publish the most popular encyclopedia in the world: Wikipedia now has its “semantic sister” called DBpedia [4], which became the LOD cloud’s nucleus.

- W3C’s semantic web standards also foresee the possibility to link data sets. For example, one can express in a machine-readable format that a certain resource is exactly (or closely) the same as another resource, and that both resources are somewhere on the web but not necessarily on the same server or published by the same author. This is very similar to linking resources to each other using hyperlinks within a document, and is the atomic unit for the giant global database previously mentioned.

- Semantic web standards are meant to be used in the most common IT infrastructure we know today: the World Wide Web (WWW). Just use your browser and HTTP! Most of the LOD cloud’s resources and the context information around them can be retrieved with a simple browser by typing a URL into the address bar. This also means that web applications can make use of Linked Data via standard web services.

Already reality – an example

Paste the following URL into your browser: http://dbpedia.org/resource/Renewable_Energy_and_Energy_Efficiency_Partnership and you will receive a lot of well-structured facts about REEEP. Follow the fact that REEEP “is owner of” reegle (http://dbpedia.org/resource/Reegle), and so on. You can see that the giant global database is already a reality!
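The same lookup can be done by a program instead of a browser. The sketch below uses Python's standard `urllib` to request the DBpedia resource above, asking for Turtle (an RDF syntax) through the HTTP `Accept` header, a mechanism known as content negotiation. It assumes the server honours `text/turtle` and redirects from the resource URI to a data document, as DBpedia has typically done; formats and redirect behaviour are ultimately up to the server. Building the request needs no network; only `look_up` actually sends it.

```python
import urllib.request

RESOURCE = "http://dbpedia.org/resource/Renewable_Energy_and_Energy_Efficiency_Partnership"


def build_lookup(uri: str, media_type: str = "text/turtle") -> urllib.request.Request:
    """Prepare an HTTP GET for a Linked Data URI, asking for RDF rather than HTML."""
    return urllib.request.Request(uri, headers={"Accept": media_type})


def look_up(uri: str, media_type: str = "text/turtle", timeout: float = 10.0) -> str:
    """Dereference the URI; urllib follows redirects (e.g. 303 See Other) automatically."""
    with urllib.request.urlopen(build_lookup(uri, media_type), timeout=timeout) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset)
```

With network access, `look_up(RESOURCE)` should return RDF statements about REEEP; the exact content depends on the current state of DBpedia. Omitting the `Accept` header would typically yield the HTML page a browser shows.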

Complex systems and Linked Data

Most systems today deal with huge amounts of information. All information is either produced within the system boundaries (and partly published to other systems) or consumed “from outside,” “mashed” and “digested” within the boundaries. Some of this growing complexity arose naturally from higher levels of education and the technical improvements made by the ICT sector over the last 30 years. Simply said, humanity is now able to handle much more information than ever before, at probably the lowest cost ever (think of higher bandwidths and lower costs of data storage).

However, most of the complexity we are struggling with is caused above all by structural insufficiencies due to the networked nature of our society. The specialist nature of many enterprises and experts is not yet mirrored well enough in the way we manage information and communicate. Instead of being findable and linked to other data, much information is still hidden.

With its clear focus on high-quality metadata management, Linked Data is key to overcoming this problem. The value of data increases each time it is re-used and linked to another resource. Re-use can only be triggered by providing information about the available information, that is, metadata. To undertake this task in a sustainable manner, information must be recognised as an important resource that should be managed just like any other.

Examples of LOD applications

Linked Open Data is already widely available in several industries, including the following three:

- Linked Data in libraries [5]: focusing on library data exchange and the potential for creating globally interlinked library data; exchanging and jointly utilising data with non-library institutions; building trust in the growing semantic web; and maintaining a global cultural graph of information that is both reliable and persistent.

- Linked Data in biomedicine [6]: establishing a set of principles for ontology/vocabulary development with the goal of creating a suite of orthogonal interoperable reference ontologies in the biomedical domain; tempering the explosive proliferation of data in the biomedical domain; creating a coordinated family of ontologies that are interoperable and logical; and incorporating accurate representations of biological reality.

- Linked government data: re-using public sector information (PSI); improving internal administrative processes by integrating data based on Linked Data; and interlinking government and non-government information.

The future of LOD

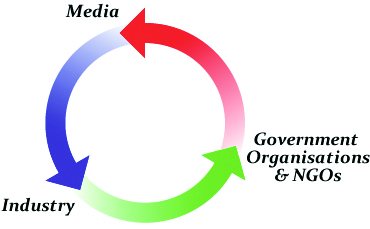

The inherent dynamics of Open Data produced and consumed by the “big three” stakeholder groups (media, industry, and government organizations/NGOs) will advance the idea, quality and quantity of Linked Data, whether it is open or not.

Whereas most of the current momentum can be observed in the government & NGO sectors, more and more media companies are jumping on the bandwagon. Their assumption is that more and more industries will perceive Linked Data as a cost-efficient way to integrate data.

Linking information from different sources is key for further innovation. If data can be placed in a new context, more and more valuable applications – and therefore knowledge – will be generated.

Links

[1] The World Wide Web Consortium (W3C), an international community that develops open standards to ensure the long-term growth of the Web: http://www.w3.org

[2] Resource Description Framework (RDF): http://www.w3.org/RDF

[3] Linked Open Data Cloud: http://www.lod-cloud.net

[4] DBpedia: http://dbpedia.org

[5] Jan Hannemann, Jürgen Kett (German National Library): “Linked Data for Libraries” (2010) http://www.ifla.org/files/hq/papers/ifla76/149-hannemann-en.pdf

[6] OBO Foundry: http://obofoundry.org

Linked Open Data Start Guide

A quick guide for your own LOD strategy and appearance

The following two sections review LOD publication and consumption and provide the essential information for establishing a powerful LOD strategy for your own organisation. We also provide further reading recommendations for anyone seeking more technical details on LOD publishing and consumption, as well as a list of the most important software tools for publishing and consuming LOD.

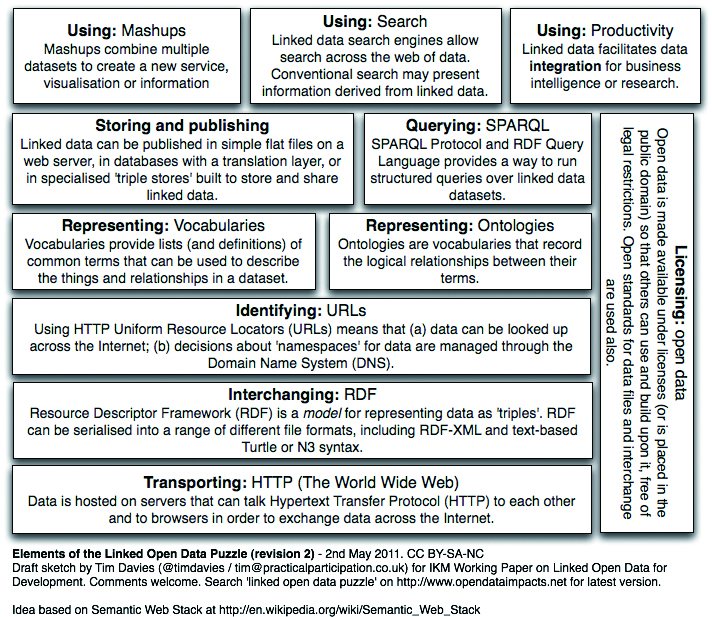

The following figure is a technical overview of the building blocks necessary for your LOD publishing and consumption strategy.

Publishing Linked Open Data

First steps for publishing your content as LOD

The idea, benefits and effort of publishing LOD have already been mentioned in the previous chapters of this publication and were discussed in terms of the 5 Stars Model of OGD. Publishing LOD provides a powerful mechanism for sharing your own data and information, along with your metadata and the respective data models, for efficient re-use. Going LOD helps your organisation to become an important data hub within your domain.

Quick guide for publishing LOD

We have prepared a short guide to the most important issues that need to be taken into account when publishing LOD, as well as a step-by-step model to get started.

Analyse your data

Before you start publishing your data, it is crucial to take a deeper look at your data models, your metadata and the data itself. Get an overview and prepare a selection of data and information that is useful for publication.

Clean your data

Data and information that come from many distributed data sources and in several different formats (e.g. databases, XML, CSV, geodata, etc.) require additional effort to ensure easy and efficient modelling. This includes removing any information that will not be included in your published data sets.

Model your data

Choose established vocabularies and additional models to ensure smooth data conversion to RDF. The next step is to create Uniform Resource Identifiers (URIs) [1] as names for each of your objects. To ensure sustainability, remember to develop data models for data that change over time.
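URIs are usually minted by rule rather than by hand. The sketch below derives HTTP URIs from a base namespace, a concept name and an object label, loosely following the `/id/{concept}/{reference}` pattern from the UK public sector URI guidance listed under Further Reading. The base `http://data.example.org` is a hypothetical namespace. Deriving identifiers from labels is a simplification: labels can change, so a stable key (such as a database ID) is often the safer reference.

```python
import re
import unicodedata

BASE = "http://data.example.org"  # hypothetical namespace you control


def slugify(label: str) -> str:
    """Lower-case ASCII slug: accents dropped, other characters collapsed to '-'."""
    ascii_label = (
        unicodedata.normalize("NFKD", label).encode("ascii", "ignore").decode("ascii")
    )
    return re.sub(r"[^a-z0-9]+", "-", ascii_label.lower()).strip("-")


def mint_uri(concept: str, reference: str, base: str = BASE) -> str:
    """Build an identifier URI of the form {base}/id/{concept}/{reference}."""
    slug = slugify(reference)
    if not slug:
        raise ValueError(f"cannot derive a URI slug from {reference!r}")
    return f"{base}/id/{slugify(concept)}/{slug}"


print(mint_uri("technology", "Wind Power (Onshore)"))
print(mint_uri("country", "Österreich"))
```

Keeping the minting rule in one function makes it easier to apply the same pattern consistently across all data sets you publish.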

Choose appropriate vocabularies

There are lots of existing RDF vocabularies available for re-use; evaluate which of them are appropriate for your data before creating anything new. If no existing vocabulary fits your needs, feel free to create your own.
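Re-using a vocabulary in practice means using its namespace URIs. The sketch below keeps a small prefix table of widely used vocabularies (RDF, RDF Schema, OWL, Dublin Core terms, FOAF, SKOS and VoID) and expands compact names such as `foaf:name` into full URIs. The selection is only an illustrative starting point; which vocabularies fit your data remains a modelling decision.

```python
PREFIXES = {
    "rdf": "http://www.w3.org/1999/02/22-rdf-syntax-ns#",
    "rdfs": "http://www.w3.org/2000/01/rdf-schema#",
    "owl": "http://www.w3.org/2002/07/owl#",
    "dct": "http://purl.org/dc/terms/",
    "foaf": "http://xmlns.com/foaf/0.1/",
    "skos": "http://www.w3.org/2004/02/skos/core#",
    "void": "http://rdfs.org/ns/void#",
}


def expand(curie: str, prefixes: dict[str, str] = PREFIXES) -> str:
    """Expand a compact URI like 'foaf:name' using the prefix table."""
    prefix, sep, local = curie.partition(":")
    if not sep or prefix not in prefixes:
        raise KeyError(f"unknown prefix in {curie!r}")
    return prefixes[prefix] + local


def turtle_prefixes(prefixes: dict[str, str] = PREFIXES) -> str:
    """Emit @prefix declarations for the header of a Turtle file, sorted by prefix."""
    return "\n".join(f"@prefix {p}: <{ns}> ." for p, ns in sorted(prefixes.items()))


print(expand("skos:prefLabel"))
print(turtle_prefixes())
```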

Specify license(s)

To ensure broad and efficient re-use of your data, evaluate, specify and provide a clear license for your data so that it is not re-used in a legal vacuum. If possible, specify an existing license that people already know. This makes your data interoperable with other data sets in terms of licensing. For example, Creative Commons [2] licenses are commonly used for OGD.

Convert data to RDF

One of the final steps is to convert your data to RDF [3], a very powerful data model for LOD. RDF is an official W3C Recommendation for semantic web data models. Remember to include your specified license(s) in your RDF files.
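As an illustration of the conversion step, the sketch below turns rows of a CSV file into N-Triples using only Python's standard library and states the license once, about the data set as a whole. The `id`/`name` columns, the `data.example.org` URIs and the choice of `dct:identifier` and `rdfs:label` are hypothetical modelling decisions; the license URI is the Creative Commons Attribution 3.0 deed, used purely as an example. Real conversions usually rely on an RDF library or a dedicated mapping tool.

```python
import csv
import io

# Hypothetical URIs; replace them with a namespace you control.
BASE = "http://data.example.org/id/project/"
DATASET = "http://data.example.org/dataset/projects"
# Example license: the Creative Commons Attribution 3.0 deed.
LICENSE = "http://creativecommons.org/licenses/by/3.0/"
RDFS_LABEL = "http://www.w3.org/2000/01/rdf-schema#label"
DCT = "http://purl.org/dc/terms/"


def literal(text: str) -> str:
    """Quote a string as an N-Triples literal, escaping special characters."""
    escaped = (
        text.replace("\\", "\\\\").replace('"', '\\"')
        .replace("\n", "\\n").replace("\r", "\\r")
    )
    return f'"{escaped}"'


def csv_to_ntriples(csv_text: str) -> list[str]:
    """Convert CSV rows with 'id' and 'name' columns into N-Triples lines.

    Assumes the 'id' values are already safe to use inside a URI.
    """
    lines = [
        # The license statement travels with the data it applies to.
        f"<{DATASET}> <{DCT}license> <{LICENSE}> .",
    ]
    for row in csv.DictReader(io.StringIO(csv_text)):
        subject = f"<{BASE}{row['id']}>"
        lines.append(f"{subject} <{DCT}identifier> {literal(row['id'])} .")
        lines.append(f"{subject} <{RDFS_LABEL}> {literal(row['name'])} .")
    return lines


sample = "id,name\np1,Solar Farm A\np2,Wind Park Nord\n"
print("\n".join(csv_to_ntriples(sample)))
```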

Link your data to other data

Before you publish, make sure that your data is linked to other data sets; both links to your own other data sets and links to third-party data sets are useful. These links support data processing and integration for data (re-)use and allow new knowledge to be created from your data sets by putting them into a new context with other data. Evaluate and carefully choose the most relevant data sets to be linked with your own.
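Links are often generated semi-automatically. The sketch below shows about the simplest possible linker: it compares normalised labels of your resources with labels from a third-party data set and emits `owl:sameAs` statements for unambiguous exact matches. The local URI and the matching rule are illustrative assumptions; dedicated link-discovery tools use far more robust similarity measures, and generated links deserve review, because `owl:sameAs` asserts that two URIs denote the very same thing.

```python
import unicodedata

OWL_SAME_AS = "http://www.w3.org/2002/07/owl#sameAs"


def normalise(label: str) -> str:
    """Case-fold and collapse whitespace so trivial differences still match."""
    return " ".join(unicodedata.normalize("NFKC", label).casefold().split())


def link_by_label(local: dict[str, str], external: dict[str, str]) -> list[str]:
    """Return owl:sameAs N-Triples for resources whose labels match exactly.

    Both arguments map URI -> label. A local label matching several external
    resources is skipped, since the link would be ambiguous.
    """
    index: dict[str, list[str]] = {}
    for uri, label in external.items():
        index.setdefault(normalise(label), []).append(uri)

    links = []
    for uri, label in local.items():
        candidates = index.get(normalise(label), [])
        if len(candidates) == 1:
            links.append(f"<{uri}> <{OWL_SAME_AS}> <{candidates[0]}> .")
    return links


local = {  # hypothetical local resource
    "http://data.example.org/id/organisation/reeep":
        "Renewable Energy and Energy Efficiency Partnership",
}
external = {
    "http://dbpedia.org/resource/Renewable_Energy_and_Energy_Efficiency_Partnership":
        "Renewable Energy and Energy Efficiency Partnership",
}
print("\n".join(link_by_label(local, external)))
```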

Publish and promote your LOD

Publish your data on the web and promote your new LOD sets to ensure wide re-use; even the best LOD will not be used if people cannot find it! Alongside other forms of promotion, it is a great idea to add your LOD sets to the LOD cloud [4], a visual presentation of LOD sets, by providing and updating the meta-information about your data sets on the Data Hub [5]. Remember to always provide human-readable descriptions of your data sets to make them “self-describing” for easy and efficient re-use.
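One machine-readable way to describe a data set for discovery is VoID (Vocabulary of Interlinked Datasets), published as a W3C Interest Group Note. The sketch below generates a minimal VoID description in Turtle; every URI, title and description passed to it is a hypothetical placeholder. Registering the data set with a catalogue remains a separate step.

```python
def literal(text: str) -> str:
    """Quote a string as a Turtle literal, escaping backslashes, quotes and newlines."""
    escaped = (
        text.replace("\\", "\\\\").replace('"', '\\"')
        .replace("\n", "\\n").replace("\r", "\\r")
    )
    return f'"{escaped}"'


def void_description(
    dataset_uri: str,
    title: str,
    description: str,
    license_uri: str,
    sparql_endpoint: str,
    data_dump: str,
    example_resource: str,
) -> str:
    """Return a minimal VoID description of one data set as Turtle text."""
    return "\n".join([
        "@prefix void: <http://rdfs.org/ns/void#> .",
        "@prefix dcterms: <http://purl.org/dc/terms/> .",
        "",
        f"<{dataset_uri}> a void:Dataset ;",
        f"    dcterms:title {literal(title)} ;",
        f"    dcterms:description {literal(description)} ;",
        f"    dcterms:license <{license_uri}> ;",
        f"    void:sparqlEndpoint <{sparql_endpoint}> ;",
        f"    void:dataDump <{data_dump}> ;",
        f"    void:exampleResource <{example_resource}> .",
    ])


ttl = void_description(
    "http://data.example.org/dataset/projects",
    "Clean energy projects",
    "Hypothetical example data set of clean energy projects.",
    "http://creativecommons.org/licenses/by/3.0/",
    "http://data.example.org/sparql",
    "http://data.example.org/dumps/projects.nt",
    "http://data.example.org/id/project/p1",
)
print(ttl)
```

The human-readable title and description sit next to machine-readable pointers (endpoint, dump, example resource), which is what makes a data set self-describing for both audiences.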

For a similar approach, we recommend the “Ingredients for high quality Linked (Open) Data” from the W3C Linked Data Cookbook [6]. The essential steps to publishing your own LOD are:

- Model and link the data

- Name things with URIs

- Re-use vocabularies whenever possible

- Publish human- and machine-readable descriptions

- Convert data to RDF

- Specify an appropriate license

- Announce the new Linked Data Set(s)

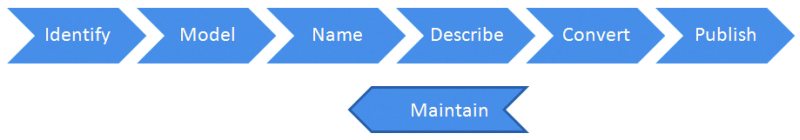

The following life cycle of Linked Open (Government) Data by Bernadette Hyland [7] visualises the path for LOD publishing:

The Four Rules of Linked Data (W3C Design Issues for Linked Data [8]) are also a good place to start understanding LOD principles:

The semantic web isn’t just about putting data on the web – that is the old “web of pages.” It is about making links, so that a person or machine can explore the semantically connected “web of data.” With Linked Data, you can find more related data.

Like the web of hypertext, the web of data is constructed with documents on the web. However, unlike the web of hypertext, where links are relationship anchors in hypertext documents written in HTML, the web of data works through links between arbitrary things described by RDF. URIs identify any kind of object or concept, but regardless of whether HTML or RDF is used, the same expectations apply to make the web grow:

- Use URIs as names for things

- Use HTTP URIs so that people can look up those names.

- When someone looks up a URI, provide useful information, using the established standards (e.g. RDF, SPARQL)

- Include links to other URIs, so that more things can be discovered
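On the publisher's side, rules 2 and 3 mean that looking up the HTTP URI of a thing should lead to a useful description. One widely used pattern, described in the W3C note “Cool URIs for the Semantic Web”, answers a request for a thing's URI with a 303 See Other redirect to a document, choosing an RDF or an HTML document according to the client's `Accept` header. The sketch below shows only that decision logic; the Accept parser is deliberately simplified (exact types and `*/*` only), and the `/id/` to `/doc/` path convention is a hypothetical choice.

```python
# Hypothetical mapping from media type to document file extension.
DOC_EXTENSIONS = {"text/turtle": "ttl", "text/html": "html"}


def preferred_media_type(accept: str, offered: list[str]) -> str:
    """Pick the offered media type with the highest q-value in an Accept header.

    Simplified parser: handles exact types and '*/*' only. If nothing matches,
    the first offered type is returned (a real server might answer 406).
    """
    weights: dict[str, float] = {}
    for part in accept.split(","):
        fields = [f.strip() for f in part.split(";")]
        media = fields[0].lower()
        if not media:
            continue
        q = 1.0
        for param in fields[1:]:
            name, _, value = param.partition("=")
            if name.strip().lower() == "q":
                try:
                    q = float(value)
                except ValueError:
                    q = 0.0
        weights[media] = q
    best, best_q = offered[0], 0.0
    for media in offered:
        q = weights.get(media, weights.get("*/*", 0.0))
        if q > best_q:
            best, best_q = media, q
    return best


def respond_to_thing(path: str, accept: str) -> tuple[int, dict[str, str]]:
    """Answer a request for a thing's URI (/id/...) with a 303 to a document (/doc/...)."""
    if not path.startswith("/id/"):
        return 404, {}
    media = preferred_media_type(accept, list(DOC_EXTENSIONS))
    doc_path = "/doc/" + path[len("/id/"):] + "." + DOC_EXTENSIONS[media]
    # Vary tells caches that the redirect target depends on the Accept header.
    return 303, {"Location": doc_path, "Vary": "Accept"}


print(respond_to_thing("/id/project/p1", "text/turtle"))
```

Separating the URI of the thing from the URIs of the documents about it keeps it unambiguous whether a statement is about, say, a wind park or about a web page describing that wind park.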

Furthermore, it is crucial to provide high-quality information about your data for developers and data workers. Provide information about the data’s provenance and how it was collected to ensure smooth and efficient work with your data.

To ensure the widest possible re-use, provide a (web) API [9] on top of the published data sets that allows users to query your data and fetch data and information from your collection tailored to their needs. A web API enables web developers to easily work with your data.
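A SPARQL endpoint is one common form such an API takes for LOD. Under the SPARQL 1.1 Protocol, a query can be sent as an HTTP GET request with the query text in the `query` parameter, and results can be requested in the SPARQL JSON results format. The sketch below builds such a request with Python's standard library; the endpoint URL is a hypothetical placeholder, and nothing is sent until `run_query` is called.

```python
import json
import urllib.parse
import urllib.request

ENDPOINT = "http://data.example.org/sparql"  # hypothetical SPARQL endpoint

QUERY = """
PREFIX rdfs: <http://www.w3.org/2000/01/rdf-schema#>
SELECT ?project ?label WHERE {
  ?project rdfs:label ?label .
} LIMIT 10
"""


def build_query_request(endpoint: str, query: str) -> urllib.request.Request:
    """Encode a SPARQL query as a GET request, asking for JSON results."""
    url = endpoint + "?" + urllib.parse.urlencode({"query": query})
    return urllib.request.Request(
        url, headers={"Accept": "application/sparql-results+json"}
    )


def run_query(endpoint: str, query: str, timeout: float = 10.0) -> list[dict[str, str]]:
    """Send the query and flatten each result binding to {variable: value}."""
    with urllib.request.urlopen(build_query_request(endpoint, query), timeout=timeout) as resp:
        results = json.load(resp)
    return [
        {var: binding[var]["value"] for var in binding}
        for binding in results["results"]["bindings"]
    ]
```

Offering a query interface like this lets developers fetch exactly the slice of data they need instead of downloading and parsing full dumps.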

Here are some best practice examples for publishing LOD:

- UK official National Open (Government) Data Portal – Linked Data Area: http://data.gov.uk/linked-data

- Official UK Legislation: http://www.legislation.gov.uk

- reegle.info LOD portal: http://data.reegle.info

- EU project: LATC – LOD around the clock: http://latc-project.eu

Links

[1] Uniform Resource Identifier, URI on Wikipedia: http://en.wikipedia.org/wiki/Uniform_resource_identifier

[2] Creative Commons: http://creativecommons.org

[3] Resource Description Framework (RDF): http://www.w3.org/RDF/

RDF on Wikipedia: http://en.wikipedia.org/wiki/Resource_Description_Framework

[4] The LOD Cloud: http://richard.cyganiak.de/2007/10/lod

[5] The Data Hub (formerly CKAN): http://thedatahub.org

[6] W3C Linked (Open) Data Cookbook: http://www.w3.org/2011/gld/wiki/Linked_Data_Cookbook

[7] Bernadette Hyland: http://3roundstones.com/about-us/leadership-team/bernadette-hyland

[8] W3C Design Issues for Linked Data: http://www.w3.org/DesignIssues/LinkedData.html

[9] Web API on Wikipedia: http://en.wikipedia.org/wiki/Web_API

Web Service on Wikipedia: http://en.wikipedia.org/wiki/Web_service

Further Reading

- How to publish Linked Data on the Web, Bizer et al.: http://www4.wiwiss.fu-berlin.de/bizer/pub/linkeddatatutorial

- Linked Data – Connect Distributed Data across the Web: http://linkeddata.org

- Linked Data: Evolving the Web into a Global Data Space, Heath and Bizer: http://linkeddatabook.com

- Designing URI Sets for the UK Public Sector: http://www.cabinetoffice.gov.uk/resource-library/designing-uri-sets-uk-public-sector

- Linked Data Patterns, Dodds & Davies: http://patterns.dataincubator.org/book/linked-data-patterns.pdf

- Linking Government Data, David Wood (Editor), Springer; 2011 edition (November 12, 2011), ISBN-10: 146141766X, ISBN-13: 978-1461417668

Consuming Linked Open Data

First steps for consuming content as LOD

Consuming LOD enables you to integrate high-quality information and data collections, mixing your own data with third-party information. These enriched data collections can act as single points of access for a specific domain in the form of a LOD portal, or as an internal or Open Data warehouse system that supports better decision making, disaster management, knowledge management and/or market intelligence solutions.

Organisations can benefit and gain a competitive advantage through the ability to: 1) spontaneously generate dossiers and information mash-ups from distributed information sources; 2) create applications based on real-time data with less replication; and 3) create new knowledge out of this interlinked data.

Quick guide for consuming LOD

Here are the most important issues and milestones to consider when consuming LOD:

Specify concrete use cases

Always specify concrete (business) use cases for your new service or application. What is the concrete problem you would like to solve? What data is available internally and what will you need from third party sources?

Evaluate relevant data sources and data sets

Based on your concrete use case(s), the next step is to evaluate relevant LOD sources for data integration. Find out which data sources are available and what quality of data these third-party sources offer (data quality is often associated with the information source itself; well-known organisations usually provide high-quality data and information). A very good approach for this evaluation is to use a LOD search engine like Sindice [1] or one of the globally available Open Data catalogues such as The Data Hub [2]. Also consider each data set's update cycle and when it was last updated.

Check the respective licenses

Evaluate the licenses for use and re-use provided by the owners of the data. Avoid using data for which no clear and understandable license is available; if in doubt, contact the respective data holders to clarify the terms. It is also important to know whether a data set's license allows it to be mashed up with other data sets.

Create consumption patterns

A consumption pattern specifies in detail exactly which data is re-used from a given data source. Not all data in a set will be relevant to the specified use case(s), so develop consumption patterns that clearly select only the relevant data in the set.
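A consumption pattern can be as simple as a whitelist of the properties a use case needs. The sketch below applies such a whitelist to N-Triples lines fetched from a third-party source. The regular expression handles only straightforward N-Triples (an IRI or blank-node subject, an IRI predicate, one statement per line), and the chosen properties are a hypothetical example. In practice the same selection is often expressed as a SPARQL `CONSTRUCT` query run against the source.

```python
import re

# subject (IRI or blank node), predicate IRI, object (everything before the final " .")
TRIPLE = re.compile(r"^(<[^>]*>|_:\S+)\s+<([^>]*)>\s+(.+?)\s*\.\s*$")

# Hypothetical consumption pattern: keep only labels and same-as links.
PATTERN = {
    "http://www.w3.org/2000/01/rdf-schema#label",
    "http://www.w3.org/2002/07/owl#sameAs",
}


def apply_pattern(lines, wanted_predicates):
    """Keep (subject, predicate, object) triples whose predicate is wanted."""
    kept = []
    for line in lines:
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        match = TRIPLE.match(line)
        if match and match.group(2) in wanted_predicates:
            kept.append(match.groups())
    return kept
```

Keeping the pattern as explicit data (here a set of property URIs) makes it easy to review against the use case and to extend when requirements change.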

Manage alignment, caching and updating mechanisms

When LOD is consumed, the different vocabularies of the consumed (internal and external) data sets often need to be matched; this vocabulary alignment [3] ensures smooth data integration. Another concern is that LOD sources are neither absolutely stable nor always available for real-time consumption. To avoid depending on a data set that may be unavailable at a given moment, create caching mechanisms for specific third-party data and information. It is also important to consume up-to-date information; a feasible approach is to implement updating mechanisms that refresh the consumed LOD. Please see the "Linked Open Data Tool Box Collection" at the end of this chapter for more information.
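Alignment, caching and updating can be sketched together in a few lines. A hand-written property mapping (here, hypothetically, FOAF's `foaf:name` mapped onto `rdfs:label`) aligns incoming triples with the internal vocabulary, and a cache keeps the last good copy of a third-party data set, re-fetching it after a maximum age and falling back to the stale copy when the source is unavailable. The fetch function, the mapping and the maximum age are all assumptions for illustration; the products listed in the tool box at the end of this chapter offer fuller versions of these mechanisms.

```python
import time
from typing import Callable, List, Optional, Tuple

Triple = Tuple[str, str, str]

# Hypothetical alignment table: external property -> internal property.
ALIGNMENT = {
    "http://xmlns.com/foaf/0.1/name": "http://www.w3.org/2000/01/rdf-schema#label",
}


def align(triples: List[Triple]) -> List[Triple]:
    """Rewrite predicates found in ALIGNMENT; leave all others unchanged."""
    return [(s, ALIGNMENT.get(p, p), o) for s, p, o in triples]


class CachedSource:
    """Cache an aligned copy of a third-party data set.

    The copy is re-fetched once it is older than max_age seconds. If the
    source cannot be reached (OSError, which includes urllib's URLError),
    the stale copy is served and the next call tries again.
    """

    def __init__(
        self,
        fetch: Callable[[], List[Triple]],
        max_age: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.fetch = fetch
        self.max_age = max_age
        self.clock = clock
        self.data: Optional[List[Triple]] = None
        self.fetched_at: Optional[float] = None

    def get(self) -> List[Triple]:
        now = self.clock()
        if self.fetched_at is not None and now - self.fetched_at < self.max_age:
            return self.data  # still fresh
        try:
            self.data = align(self.fetch())
            self.fetched_at = now
        except OSError:
            if self.data is None:
                raise  # nothing cached yet, so the failure must surface
        return self.data
```

The injectable `clock` keeps the refresh logic testable; in production the default monotonic clock is used, and `max_age` would be chosen to match the source's update cycle.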

Create mash ups, GUIs, services and applications on top

To serve your users with powerful LOD applications or services built on top of mashed-up LOD, it is crucial to provide user-friendly graphical user interfaces (GUIs) and useful services for end users.

Establish sustainable new partnerships

When using third-party data and information, contact the data providers to build new partnerships, and offer your own data for their use in return.

In conclusion, here are some best practice examples of LOD consumption by these LOD players:

- UK Organograms: http://data.gov.uk/organogram/hm-treasury

- reegle.info country profiles: http://www.reegle.info/countries

- EU project: LATC – Linked Open Data Around-The-Clock: http://latc-project.eu

Links

[1] Sindice – the semantic web index: http://sindice.com/

[2] The Data Hub: http://thedatahub.org

[3] Vocabulary / Ontology Alignment on Wikipedia: http://en.wikipedia.org/wiki/Ontology_alignment

Further Reading

- Second International Workshop on Consuming Linked Data: http://km.aifb.kit.edu/ws/cold2011

- Semantic Web for the Masses, paper by Lisa Goddard: http://firstmonday.org/htbin/cgiwrap/bin/ojs/index.php/fm/article/view/3120/2633

- Linked Data: The Future of Knowledge Organization on the Web: http://www.iskouk.org/events/linked_data_sep2010.htm

- Linked Data: Evolving the Web into a Global Data Space, Heath & Bizer: http://linkeddatabook.com

Linked Open Data Tool Box Collection

The Linked Open Data Tool Box Collection provides a list of important software tools and services for LOD publishing and consumption.

- PoolParty Product Family: http://www.poolparty.biz

Services and tools for LOD-based metadata management, enterprise search, text mining and data integration

- LOD2 Technology Stack: http://lod2.eu/WikiArticle/TechnologyStack.html

Technology stack for LOD by the R&D project LOD2 – Creating Knowledge Out of Interlinked Data

A link discovery framework for the web of data

Link discovery framework for metric spaces

- Virtuoso Universal Server: http://virtuoso.openlinksw.com

Universal server for Linked Data consumption, storage and retrieval

- Callimachus Project: http://callimachusproject.org

A framework for data-driven applications using Linked Data

- Sindice: http://sindice.com

The semantic web index

- RDF Alchemy / The LOD Manager: http://www.semanticweb.at/linked-data-manager

Management suite for scheduling alignment, caching and updating mechanisms for LOD

A good resource for LOD projects and suppliers (in the domain of eGovernment) is W3C's community directory, which was launched in late 2011: http://dir.w3.org

[EF1] See the PDF: these are 5 small tables, on pages 18 and 19 of the PDF.