Boek/Hage

< BoekMapping Statistical Linked Data: A Tutorial on Combining Linked Open Data and Tabular Data in R

Auteur

Willem Robert van Hage (SynerScope)

Introduction

[bewerken]

This chapter is a tutorial on data analysis. We will demonstrate, step by step, how to analyze a combination of linked data and non-linked data with the R statistical programming language. We will show how to join linked data with tabular statistical data. Specifically, we will be working with linked data from the Netherlands’ Cadastre, Land Registry, and Mapping Agency (Kadaster), about addreses and buildings, the Basisregistratie voor Adressen en Gebouwen (BAG); linked data from DBpedia, and non-linked tabular statistical data from the Statistics Netherlands (CBS). We will investigate geographical correlations between building properties and the socio-economical status of their inhabitants. Topics covered in this tutorial are: simple SPARQL endpoint interaction (Section 2), loading tabular CSV data (Section 3), preprocessing the data (Section 4), joining linked data with non-linked data by a shared key (Section 5), basic data exploration and statistics (Section 6), aggregation (Section 7), and geographical visualization (Section 6 and 7).

Importing Linked Data

[bewerken]

To start with this tutorial, you will need a running setup of the R programming language (R, http://r-project.org). We advise you to use the RStudio graphical user interface for R (RStudio, http://rstudio.org), which provides many useful editing features. Inside R, we will make use of two contributed libraries, SPARQL and ggmap, that respectively provide access to SPARQL end-points over the Web, and plotting of data on Google Maps. These libraries can be installed automatically from inside R by typing the following command inside R:

> install.packages(c('SPARQL','ggmap'),dependencies=TRUE)

Once these libraries are installed they can be loaded using the library function.

> # load the SPARQL and ggmap libraries

> library(SPARQL)

> library(ggmap)

Now that we’ve prepared our system and loaded the necessary libraries we can get started with loading the data from the BAG SPARQL end-point using a SPARQL query. This can be done with the SPARQL function. We need to tell this function a few things: At which URL to get the data, and which query to use to get the data. We will define two variables to contain exactly these bits of information, so that we can pass these variables to the SPARQL function.

We can get the data from the BAG end-point at Geodan:

> endpoint <- "[http://lod.geodan.nl/BAG/sparql http://lod.geodan.nl/BAG/sparql]";

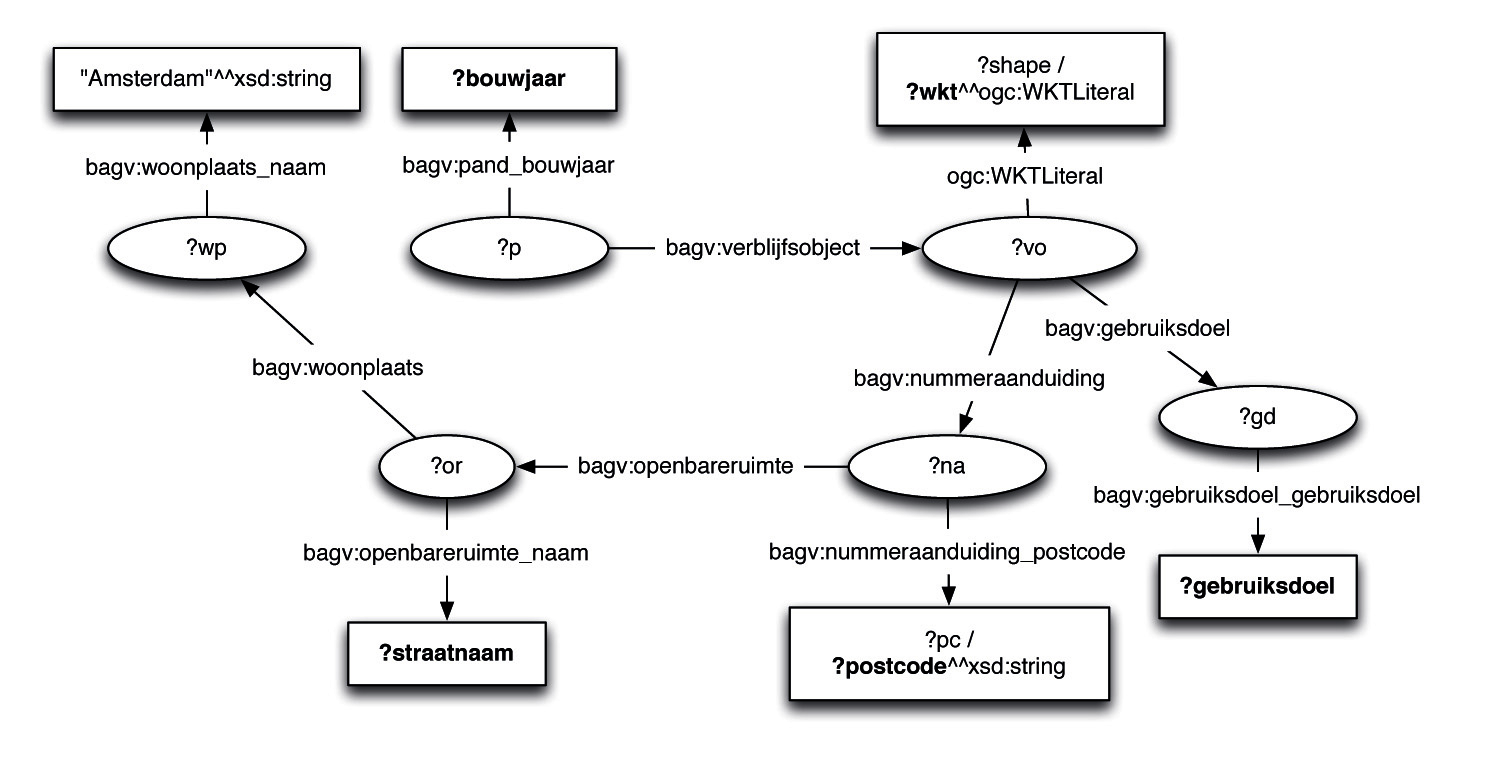

The data in the triple store behind the SPARQL store follows a schema defined by Geodan. The specific part of the data we will use in this tutorial is the link between the postal code (postcode), street name (straatnaam), usage (gebruiksdoel), the location (wkt, short forWell-Known Text geometry), and building year (bouwjaar). Per address, these are linked together in little graphs of nodes and edges. We will define a query that looks up occurrences of graph patterns that link them together and we will find one occurrence for each address in the store. To make this tutorial run, we limit ourselves to a subset of the addresses, specifically, those that are in the city of Amsterdam. This can also be specified as part of the SPARQL graph pattern query. In Figure 1 you can see a picture of the graph pattern that we will look up with the SPARQL query listed below.

> # define a SPARQL query to load data

> q <- 'PREFIX bagv: <[http://lod.geodan.nl/BAG/vocab/resource/ http://lod.geodan.nl/BAG/vocab/resource/]>

+ PREFIX bag: <[http://lod.geodan.nl/BAG/ http://lod.geodan.nl/BAG/]>

+ PREFIX ogc: <[http://lod.geodan.nl/geosparql_vocab_all.rdf# http://lod.geodan.nl/geosparql_vocab_all.rdf#]>

+ SELECT ?postcode ?straatnaam ?gebruiksdoel ?wkt ?bouwjaar

+ WHERE {

+ ?wp bagv:woonplaats_naam "Amsterdam"^^xsd:string .

+ ?or bagv:woonplaats ?wp ;

+ bagv:openbareruimte_naam ?straatnaam .

+ ?na bagv:openbareruimte ?or ;

+ bagv:nummeraanduiding_postcode ?pc .

+ BIND(str(?pc) AS ?postcode)

+ ?vo bagv:nummeraanduiding ?na ;

+ ogc:WKTLiteral ?shape .

+ FILTER(regex(str(?shape),"^[^<]"))

+ BIND(str(?shape) AS ?wkt)

+ ?p bagv:verblijfsobject ?vo ;

+ bagv:pand_bouwjaar ?bouwjaar .

+ ?vo bagv:gebruiksdoel ?gd .

+ ?gd bagv:gebruiksdoel_gebruiksdoel ?gebruiksdoel .

+ }'

The query can be any SPARQL statement (SPARQL, http://www.w3.org/TR/sparql11-query/). Detailed descriptions of SPARQL can be found on the Web. A brief discussion of the query we use in this tutorial follows.

Figure 1: Part of the RDF schema used in the Geodan RDF version of the BAG that we will extract using SPARQL.

The PREFIX statements declare abbreviations we can use in the query to specify the URIs of the graph edges, which represent properties of things. For instance, we can write bagv:woonplaats instead of http://lod.geodan.nl/BAG/vocab/resource/woonplaats. With the SELECT statement, which is analogous to the SQL SELECT statement, we delare which variables (the keywords that start with a questionmark) we want to return as columns of a result table. Each row in the result table will be a distinct match of the graph pattern specified in the WHERE expression. The WHERE expression contains a graph pattern in a concise notation, as well as additional variable bindings and filters. For example, on line four and five of the graph pattern we match a triple consisting of a property bagv:openbareruimte between two variables, ?na and ?or, and a property bagv:nummeraanduiding_postcode between ?na and ?pc. The BIND statement selects the string value of the postal code captured in the variable ?pc, which contains a typed string (e.g. we select ‘1081HV’ from ‘1081HV’^^xsd:string). The FILTER statement three lines below states that we want shapes that do not start with an opening bracket (‘<‘), which in the case of the Geodan version of the BAG separate WGS84 coordinates from coordinates in the Dutch coordinate system Rijksdriehoeksmeting.

Now that we have a specification of which variable instantiations we want to gather obeying which pattern in the form of a SPARQL query, we can send the query to the input with the SPARQL function. The table is stored in R as a data frame called bag_res.

> # fire the query at the SPARQL end-point

> bag_res <- SPARQL(url=endpoint,query=q)$results

To demonstrate what comes back we use the head function to show the first three lines of the result table.

> # show the first lines of the resulting data frame > head(bag_res,n=3) postcode straatnaam gebruiksdoel 1 1019GM Vriesseveem overige gebruiksfunctie 2 1019GW Jollemanhof kantoorfunctie 3 1019GW Jollemanhof kantoorfunctie wkt bouwjaar 1 POINT(4.91891809473891 52.3773045855061) 2005 2 POINT(4.91978793944898 52.376984465474) 2005 3 POINT(4.9198026246807 52.3769845234389) 2005

The first line contains an address with postal code 1019GM in the street Vriesseveem with a aspecific usage (gebruiksdoel), the other two are offices in the street Jollemanhof. All three addresses are in a building built in 2005.

Importing Tabular Data

[bewerken]

In the previous section we demonstrated how to get tabular data from a triple store using the SPARQL package for R. A much simpler way to read and write data frames is character separated files with the read.table and write.table functions in R. We will quickly show how this is done by loading a file, ‘Eindbestand SEC.dat’ from the CBS on social economical classification of addresses (CBS SEC, http://bit.ly/146jcfH). This file contains tab separated values per row delimited by newline characters. Missing data values are indicated with the letter x. We can specify that the first line contains column names and that we would like to treat the missing data values as ‘Not Available’ values in R. These are displayed differently for numerical values (‘<NA>‘) and categorical values (‘NA’).

> # load Social Economic figures from the CBS from a CSV table file

> sec <- read.table("Eindbestand SEC.dat",

+ header=TRUE,sep="\t",na.strings="x")

> head(sec,n=3)

pc6 gemeentecode Inkomensontvangers Laaginkomen Hooginkomen

1 1011AB 363 NA <NA> <NA>

2 1011AC 363 10 <NA> <NA>

3 1011AE 363 NA <NA> <NA>

Uitkeringsontvangers Zelfstandigen Fiscaalmaandinkomen

1 NA NA NA

2 NA NA 2300

3 NA NA NA

You can see that this table contains a postal code column, pc6, with compatible values to the postcode column in the BAG. In Section 5 we will join these two data frames into a single data frame with all the values on this shared column.

Caching SPARQL results as Tabular Data

[bewerken]

You can use the table reading and writing functions to store SPARQL result tables in a character separated file as follows.

> # write results to a cache file

> write.table(bag_res,file="amsterdam_bag_res.tsv",sep="\t")

> # read from a cached result file

> bag_res <- read.table("amsterdam_bag_res.tsv",+ header=TRUE,sep="\t")

This saves you the latency of Web lookups and the parsing of SPARQL result XML files. Also, it allows you to keep working when Web access is unavailable, and it reduces the stress on the SPARQL server.

Data Preprocessing

[bewerken]

Quite often when loading data from third parties, you will run into data representations that are different from how they are needed for analysis. In this case, we would like to plot points on a Google map using the ggmap package, which requires vectors of latitude and longitude coordinates. Our data is supplied in WKT shapes. We we use a quick and dirty way to get the coordinates out of the WKT strings. We simply useregular expression substitusion to get the coordinates out of the WKT strings. A more robust, but slower, way would be to use a WKT parser, like the parser in the rgeos package.

> bag_res$long <-

+ as.numeric(gsub("POINT\\((\\S+) (\\S+)\\).*","\\1",bag_res$wkt))

> bag_res$lat <-

+ as.numeric(gsub("POINT\\((\\S+) (\\S+)\\).*","\\2",bag_res$wkt))

In the CBS data the letter x was used for missing values. In the BAG we see that the number 9999 is used to indicate unknown building years. Also we noticed that the building years below 1850 are suddenly very sparse and that the number 1005 is used for unknown early dates. To make visualization easier (the few old buildings upset the coloring if we want a color gradient over time) we restrict the range of viable building years to everything since 1850. We treat every year below that and the year 9999 as ‘Not Available’ by replacing these years by NA.

> # interpret the building date 9999 as "Not Available" > bag_res[which(bag_res$bouwjaar == 9999 | + bag_res$bouwjaar < 1850),]$bouwjaar = NA

[bewerken]

In this section we will join the BAG data with the CBS socio economical classification data by postal code. This enables us to combine, for example, the average monthly income from the CBS (smoothed numbers for anonymization) with the geocoordinates and building years from the BAG. We can join two data frames in R with the merge function. We simply call it on the two data frames and specify which column from each of them to match the rows by. This means, if a value in the CBS SEC pc6 column literally matches a value in the BAG postcode column, the two rows are concatenated.

> # perform an inner join between the CBS and BAG data on postal code > sec_bag <- merge(sec,bag_res,by.x="pc6",by.y="postcode") > head(sec_bag,n=2) [table, alles rechts uitlijnen] pc6 gemeentecode Inkomensontvangers Laaginkomen Hooginkomen 1 1011AB 363 NA <NA> <NA> 2 1011AB 363 NA <NA> <NA> Uitkeringsontvangers Zelfstandigen Fiscaalmaandinkomen straatnaam 1 NA NA NA De Ruijterkade 2 NA NA NA De Ruijterkade gebruiksdoel wkt bouwjaar long 1 woonfunctie POINT(4.90560744056207 52.3777814734737) 1916 4.905607 2 kantoorfunctie POINT(4.90578386133317 52.3777642154535) 1916 4.905784 lat 1 52.37778 2 52.37776

In this case the match is a literal equality match, but it is quite possible to use merge in combination with subset and a custom similarity function to do approximate matching. This is shown in detail in a tutorial on the Web by Tony Hirst (5R-bloggers, http://bit.ly/15gnjoQ).

Data Analysis

[bewerken]

In this section we will explore the CBS and BAG data. We will start by plotting the building year distribution from the BAG and the average monthly income from the CBS data to get an overview. Then we will exploit the join over the postal code to see whether or not there is a correlation between the two. This will answer the question: ‘Do rich people live in new buildings in Amsterdam?’.

Visualizing Distributions

[bewerken]

In R you can make a histogram of values with the hist function. You can specify the bin size, i.e. the aggregation interval or the width of the bars, with the breaks parameter.

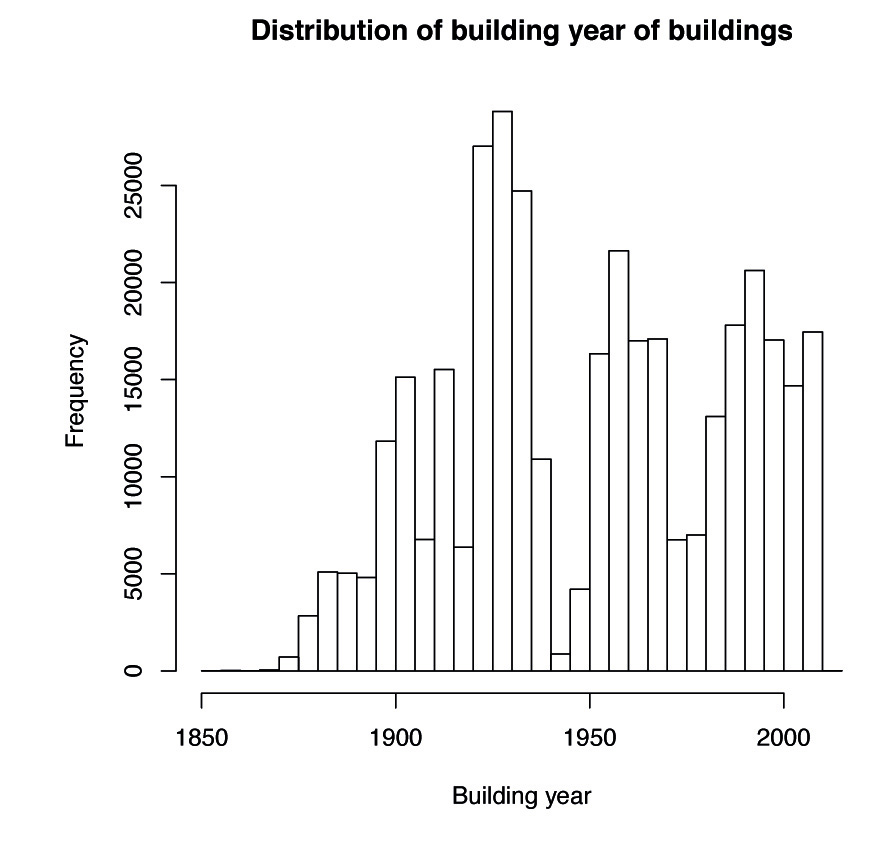

> # overview of building years > hist(sec_bag$bouwjaar, + xlab="Building year", + main="Distribution of building year of buildings", + breaks=seq(1850,2015,by=5))

As you can see in Figure 2, big urbanization started at the end of the 19th century and

there are clear dips in the second world war and the crisis of the 1970s.

Figure 2: Distribution of building years.

Now we will do the same with average monthly income. When bin sizes are not

supplied, R decides for itself how to bin the data.

> # overview of income per month > hist(sec_bag$Fiscaalmaandinkomen, + xlab="Fiscal income per month", + main="Distribution of income")

In Figure 3 notice social security at the bottom of the distribution and the cap set at 10000 euro per month.

Statistical Correlation

[bewerken]

If rich people live in new buildings then rows with a high income also have a high building year. By joining the tables on postal code we can simply select the column containing monthly incomes and the column with building years, because these will match up according to postal code. To compute a Pearson correlation (r) in R, you can simply use the cor function on these two vectors. A slight complication is that some values, both in the building year column and in the income column, are not available.

These are not necessarily in the same rows. To do a fair correlation test, we leave out all rows with unavailable data in either column. This does introduce a non-response bias, but there is no simple way around this methodological problem. A proper treatment of missing data falls outside of the scope of this tutorial.

> # compute correlation between monthly income and building date,

> # ignoring incomplete data

> cor(sec_bag$Fiscaalmaandinkomen,sec_bag$bouwjaar,use="complete.obs")

Figure 3: Monthly income distribution.

As you can see, the correlation is slightly negative. We can not conclude there is any correlation. To illustrate this, let’s plot income and building year on the map to see where the rich people live and where the new buildings are.

Mapping the Data

[bewerken]

To plot values on a map, you can use the qmap function from the ggmap package. This requires you to specify which coordinates to put on the x and y axis of the map plot. qmap uses Google maps, which in turn use WGS84 coordinates, so the x and y coordinates have to use latitude and longitude as well. The ggmap package uses the Grammar of Graphics (GOG) pradigm to combine visualization type, data specification, and styling in a modular way. The implementation of GOG in R overloads the + operator to “add” up these different aspects of a graphical visualization.

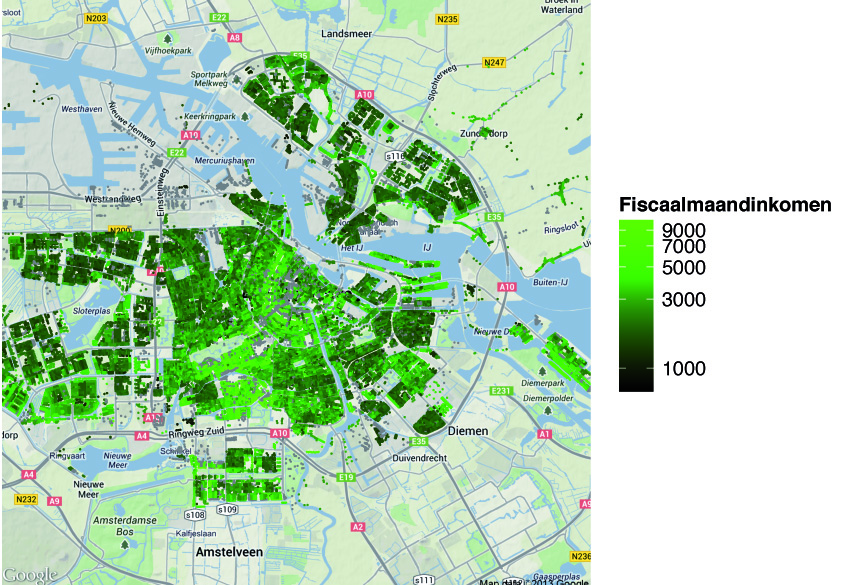

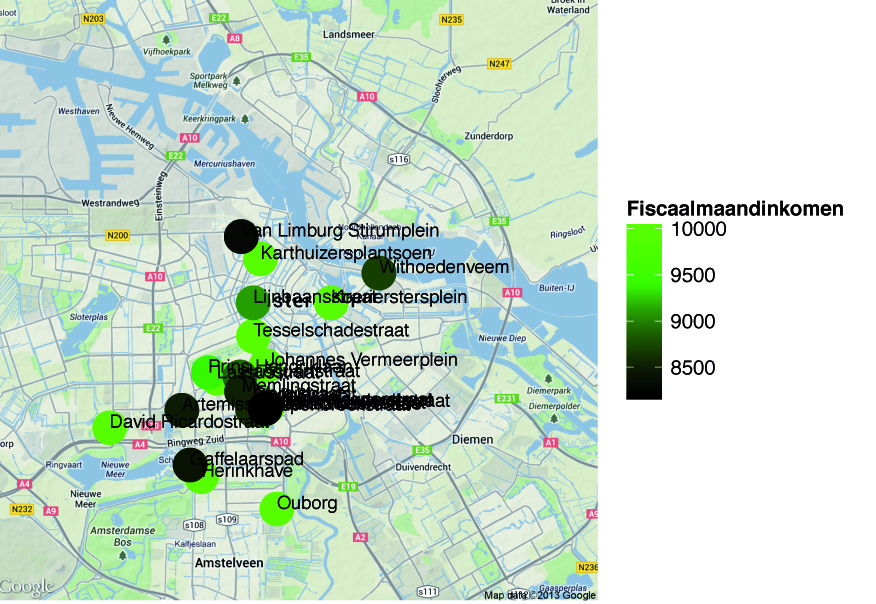

In Figure 4 you can see that the rich people prefer to live near to the water, but also in the south of the central part of Amsterdam, “Amsterdam zuid”, specifically in the area south of the Vondel park. We transformed the income logarithmically, to get more definition in the coloring of the higher incomes. If we do the same visualization, but with a square root transform to get a better definition of the coloring of the newer buildings.

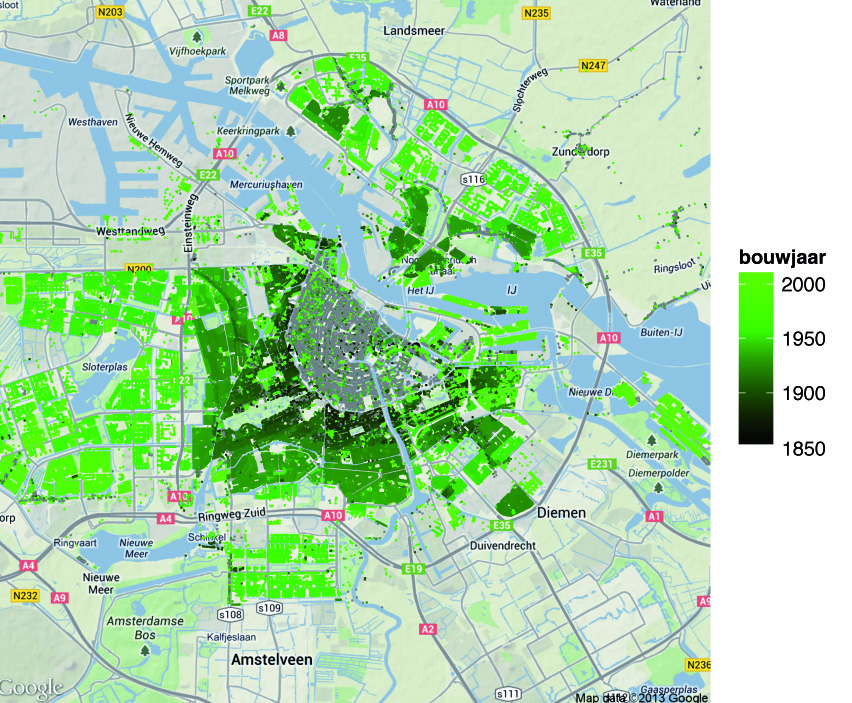

> qmap(location='Amsterdam', zoom=12) +

+ geom_point(aes(x=long,y=lat,colour=bouwjaar),

+ data=sec_bag,size=0.5) +

+ scale_colour_gradientn(colours=c("black","green"),trans="sqrt")

Figure 4: Monthly income per address, unavailable data are shown in gray.

Figure 5: Year at which the building of each address was built, unavailable data are shown in gray.

In Figure 5 you can see that Amsterdam clearly grew from the inside out, and that the buildings on the harbor islands are the newest developments. This in combination with Figure 4 shows why there is no conclusive correlation result. Rich people live in the new harbor estates, but also in the old area in Amsterdam zuid, south of the Vondelpark.

Data Aggregation

[bewerken]

Until now we have dealt with concrete, unaggregated data. However, if we want to answer questions like ‘Which streets have the highest average income?’, we have to average the income over the addresses per street. There are a few ways to accomplish this in R, such as the wonderful plyr and reshape packages, but here we will show the simplest way to aggregate data, the built-in aggregate function. The following code applies the mean function to all values in the Fiscaalmaandincome column with the same straatnaam.

> # aggregate the location and income by street name

> agg_inkomen <- aggregate(Fiscaalmaandinkomen ~ straatnaam,

+ data=sec_bag,mean)

> head(agg_inkomen,head=5)

straatnaam Fiscaalmaandinkomen

1 Aaf Bouberstraat 1819.643

2 Aagtdorperpad 2600.000

3 A.A.H. Struijckenkade 1700.000

4 Aakstraat 2342.105

5 Aalbersestraat 1599.673

6 Aalsmeerplein 2700.000

We can do the same aggregation on latitude and longitude to calculate the centroids of the streets. These can then be attached to the aggregated income values with the merge function we introducted in Section 5.

> # aggregate geocoordinates by street name > # and join with the aggregated income > agg_lat <- aggregate(lat ~ straatnaam, data=sec_bag,mean) > agg_long <- aggregate(long ~ straatnaam, data=sec_bag,mean) > agg_street <- merge(agg_inkomen, + merge(agg_lat, + agg_long, + by="straatnaam"), + by="straatnaam") > head(agg_street,n=5) straatnaam Fiscaalmaandinkomen lat long 1 Aaf Bouberstraat 1819.643 52.35684 4.820258 2 Aagtdorperpad 2600.000 52.39764 4.956488 3 A.A.H. Struijckenkade 1700.000 52.38203 4.812593 4 Aakstraat 2342.105 52.40763 4.911949 5 Aalbersestraat 1599.673 52.37904 4.797936

Let’s look at the top-25 richest streets of Amsterdam. We can sort a data frame by the values of a column with the order function. If we need them in decreasing order, we can simply add a minus before the column name.

> # show the top-25 streets with the highest average income > top_agg <- head(agg_street[order(-agg_street$Fiscaalmaandinkomen),],+ n=25) > top_agg straatnaam Fiscaalmaandinkomen lat long 487 Chopinstraat 10000.000 52.34760 4.879134 589 David Ricardostraat 10000.000 52.34157 4.826554 1150 Herinkhave 10000 10000.000 52.33059 4.860133 1151 Herman Gorterstraat 10000.000 52.34648 4.883591 1433 Johannes Vermeerplein 10000.000 52.35545 4.883748 1505 Karthuizersplantsoen 10000.000 52.37931 4.881732 1562 Koerierstersplein 10000.000 52.36949 4.907647 1680 Lassusstraat 10000.000 52.35265 4.865783 2165 Ouborg 10000.000 52.32339 4.887754 2442 Richard Wagnerstraat 10000.000 52.34656 4.880149 2500 Rossinistraat 10000.000 52.34574 4.879246 2788 Tesselschadestraat 10000.000 52.36204 4.879180 3027 Verdistraat 10000.000 52.34791 4.880510 3225 Willem Royaardsstraat 10000.000 52.34627 4.884454 657 Diepenbrockstraat 9531.250 52.34542 4.882581 2371 Prins Hendriklaan 9390.323 52.35390 4.862630 1733 Lijnbaansstraat 9100.000 52.36950 4.879062 1057 Hacquartstraat 8890.000 52.35293 4.874257 3250 Withoedenveem 8700.000 52.37615 4.925104 265 Bernard Zweerskade 8588.235 52.34563 4.878846 1904 Memlingstraat 8515.789 52.34969 4.874836 154 Artemisstraat 8478.947 52.34548 4.852965 929 Gaffelaarspad 8400.000 52.33324 4.855981 2979 Van Limburg Stirumplein 8300.000 52.38431 4.874713 1043 Guido Gezellestraat 8200.000 52.34585 4.883473

As a final visualization task, let’s plot the top-25 richest streets, labeled with their street name, on the map, rescaling the dots to make them more visible.

> # The top-25 highest income streets plotted on the map > qmap(location='Amsterdam', zoom=12) + + geom_point(aes(x=long,y=lat,color=Fiscaalmaandinkomen,size=1), + data=top_agg) + + scale_colour_gradientn(colours=c("black", "green")) + + scale_size(range=8,guide="none") + + geom_text(aes(x=long,y=lat,label=straatnaam), + data=top_agg, + size=3,vjust=0,hjust=0)

Conclusion

[bewerken]

In this tutorial we have treated how to load Linked Open Data from a SPARQL endpoint into R and how to join it with non-linked data from tabular files, such as tabseparated value files, to combine knowledge from multiple sources to answer questions that could not be answered with one data set alone. We have treated basic distribution exploration, correlation between two properties with missing values, and visualization of point data on a map. Finally, we have shown how to do simple aggregation in R.

Figure 6: Monthly income aggregated at street level. Shown are the 25 streets with the highest average income.

The data and the R code used for this tutorial (in Sweave format) can be found on the Web, along with other tutorials on SPARQL and R, in the tutorials section of the Linked Science weblog: http://linkedscience.org/.

Acknowledgements

[bewerken]

Thanks go to Jorik Blaas, Niels Willems, Jesper Hoeksema, for their support with the triple store, data gathering, and visualization.

References

[bewerken]

- R; http://r-project.org

- RStudio: http://rstudio.org

- SPARQL: http://www.w3.org/TR/sparql11-query/

- CBS SEC: http://bit.ly/16EoKRb

- R-bloggers: http://bit.ly/15gnjoQ